Comcast Business Advances its Enterprise Strategy Through AI-driven Innovation and Ecosystem Expansion

2026 Comcast Business Analyst Conference, Philadelphia, April 15-16, 2026: A select group of industry analysts gathered at the Comcast Center in Philadelphia to hear from Comcast Business leaders about the progress and success of the unit’s sales and go-to-market strategies. The event carried forward the theme introduced at last year’s conference, “Everything, Everywhere, All at Once,” which reflects the increasingly complex operating environment customers face and Comcast Business’ role in helping them navigate change through integrated solutions. Building on this theme, Comcast Business emphasized the accelerating pace of innovation over the past year, highlighting advancements in AI and network capabilities intended to help its solutions keep up with the speed of business transformation. The event was hosted by NBC News Business and Data Correspondent Brian Cheung and included a State of the Business session with Comcast Business President Edward Zimmermann, a Strategy & Vision session with Comcast Business Chief Product Officer Bob Victor, and an update on Comcast’s network from Chief Network Officer Elad Nafshi. The agenda also featured panel discussions with senior leadership, speaker sessions with Comcast Business customers, and fireside chats with high-profile thought leaders on AI development and trends.

TBR perspective

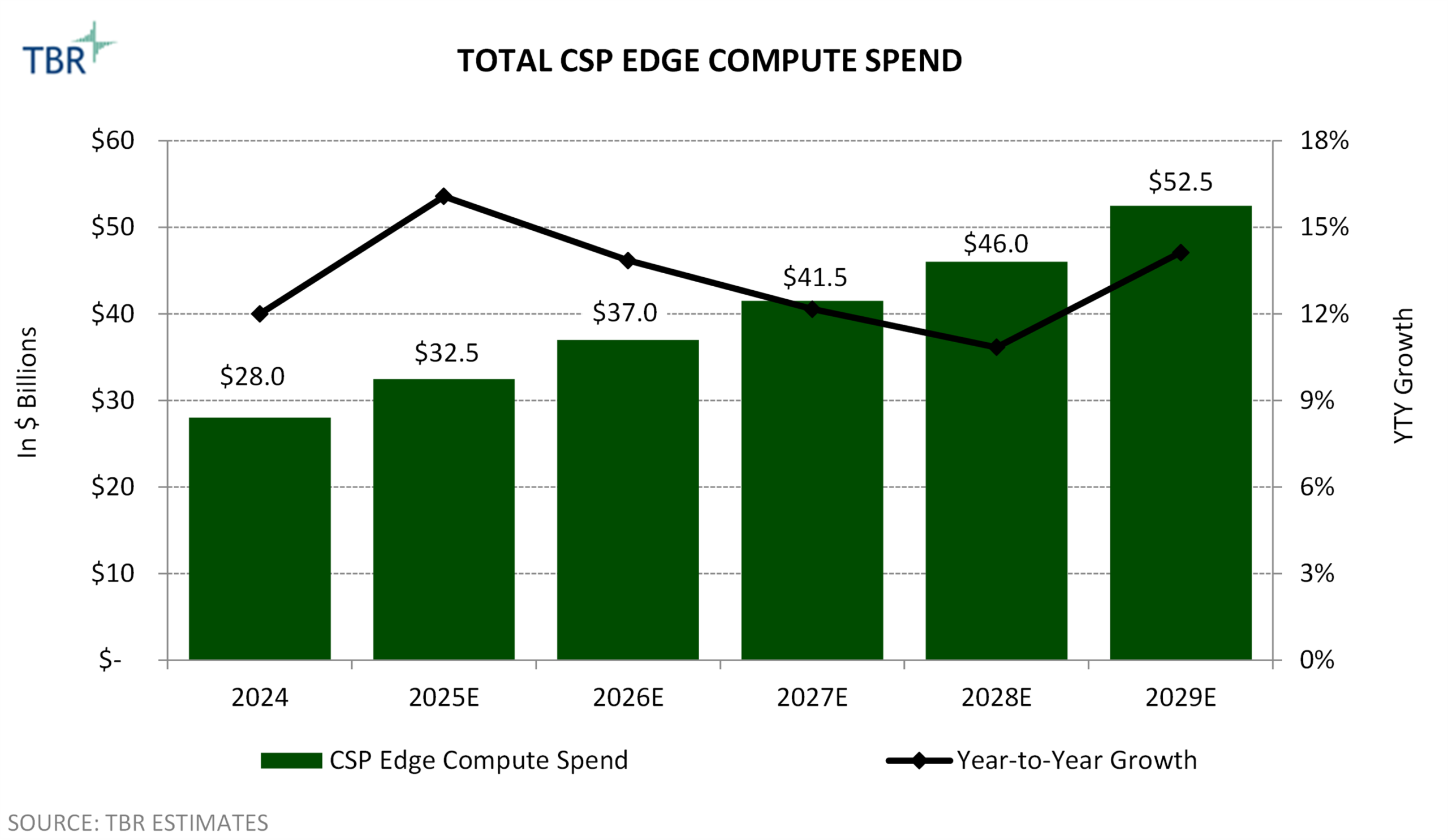

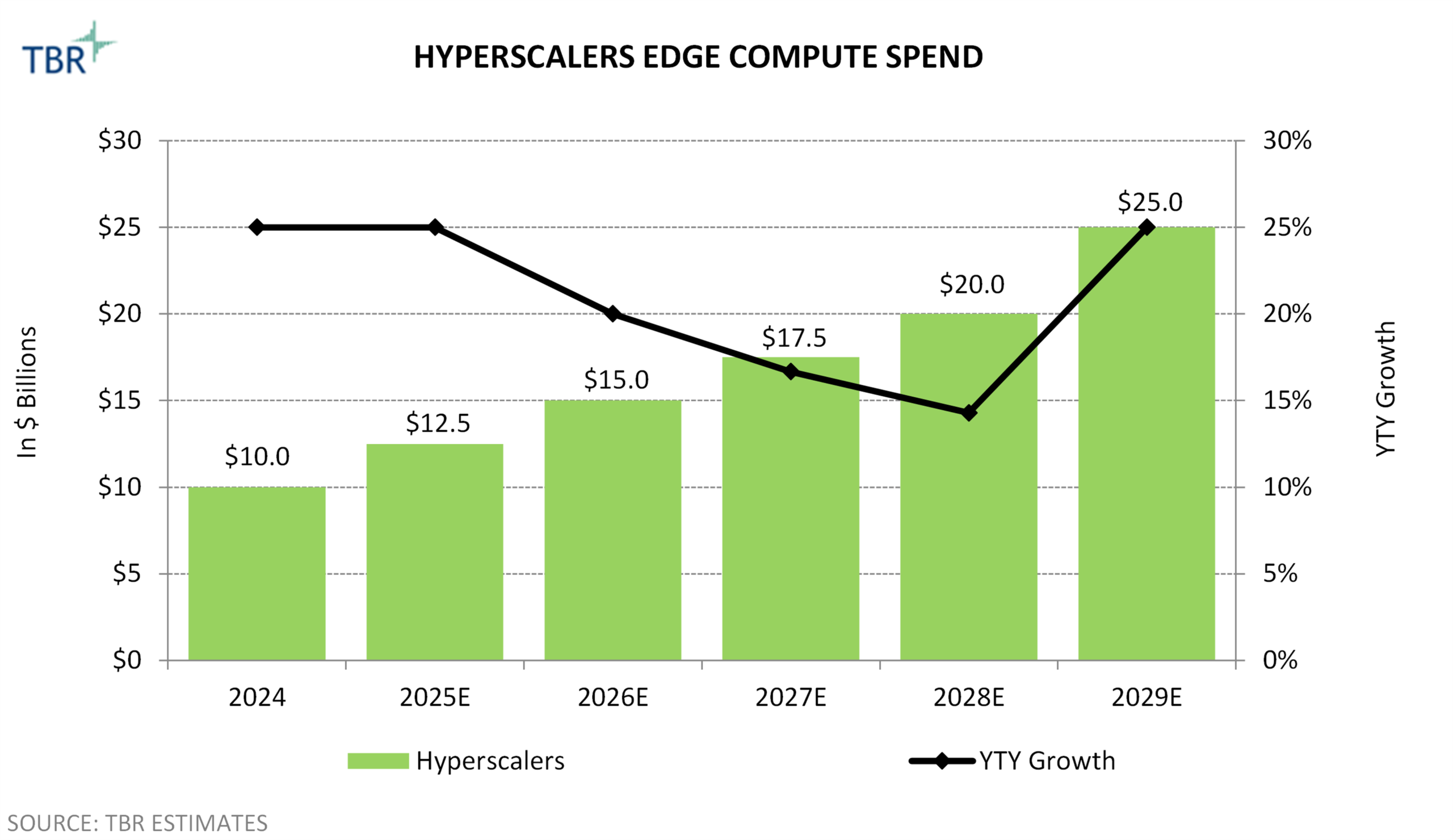

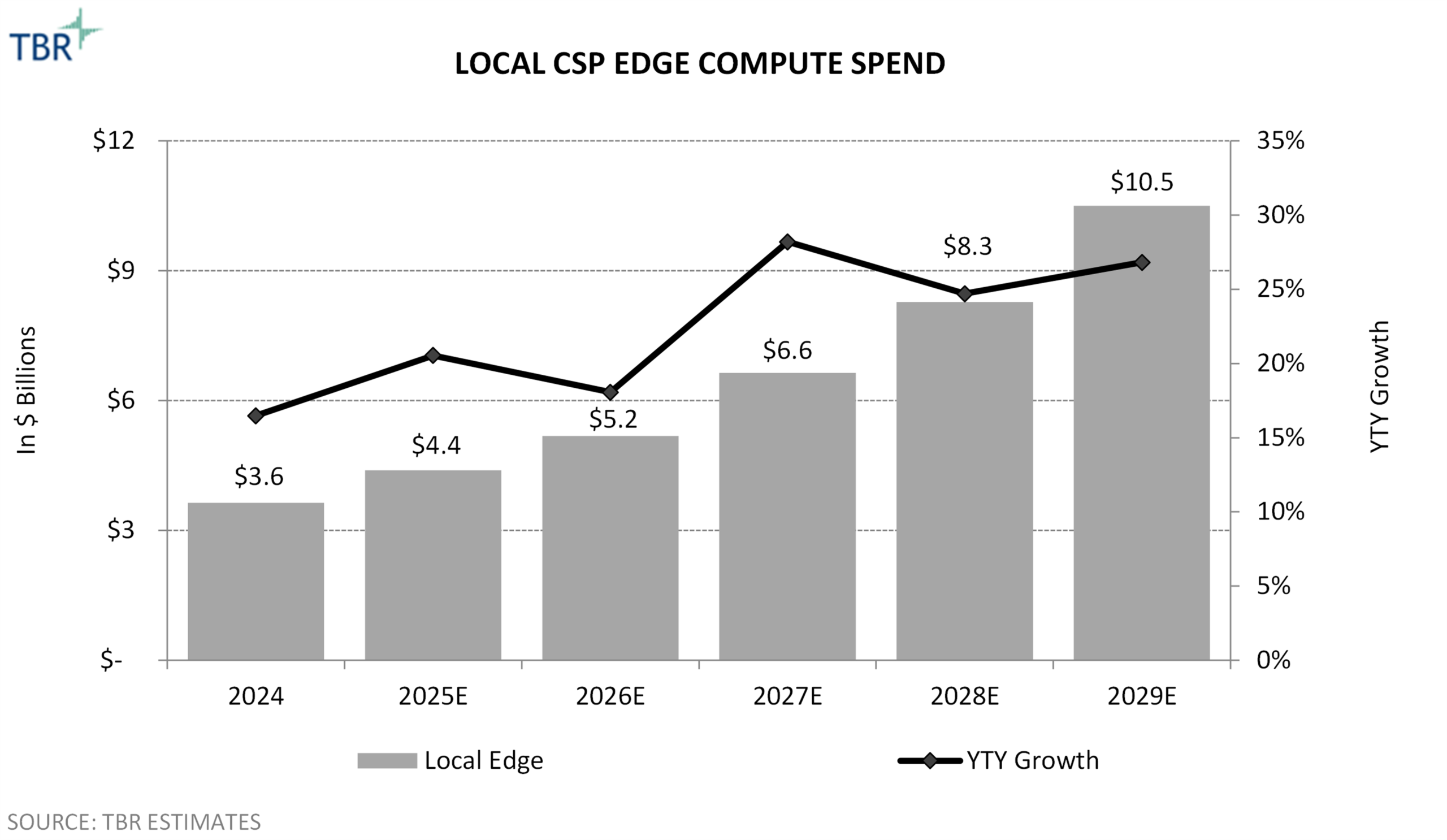

Since 2025, Comcast Business has accelerated its transition from a connectivity-led provider to a solutions- and platform-oriented partner for enterprise customers. The 2026 analyst conference highlighted the company’s focus on expanding share among global enterprises through continued investment in AI-enabled networking, cybersecurity and edge compute capabilities. This evolution reflects both opportunity and necessity. Enterprise growth is increasingly driving overall performance, while the SMB segment faces intensifying pricing pressure from fixed wireless access (FWA) and converged offerings.

At the same time, rapid advancements in AI are reshaping customer requirements, placing greater emphasis on low-latency connectivity, integrated security and real-time data processing. Comcast Business is positioning itself to capitalize on these trends by leveraging its network scale, partner ecosystem and managed services portfolio to deliver differentiated outcomes. However, success will depend on the company’s execution, particularly its ability to monetize AI-driven capabilities and scale its global platform.

Impact and opportunities

Comcast Business drives revenue growth via enterprise expansion, while its SMB segment faces increasing headwinds

Comcast Business’ revenue performance remains relatively strong: the unit generated more than $10.2 billion in 2025, surpassing its long-term goal of $10 billion in annual revenue. Growth is increasingly driven by the enterprise segment, which expanded 13.1% in 2025, supported by the integration of acquisitions such as Masergy and Nitel. The company also now serves approximately 90% of Fortune 500 companies in some capacity. Comcast Business is also expanding its focus on multinational enterprises, leveraging partnerships with global operators in more than 130 countries.

Despite this momentum, the SMB segment, which is the company’s largest revenue contributor, faces an increasingly challenging environment. Competition from FWA providers and converged offerings in the U.S. market is intensifying pricing pressure as small businesses gravitate toward lower-cost, “good enough” connectivity solutions. These dynamics contributed to a net loss of 48,000 business customer relationships in 2025, compared with a net loss of 16,000 in 2024 and net additions of 17,000 in 2023. TBR believes the majority of these losses occurred within the SMB segment.

To offset customer losses, Comcast Business is increasing its focus on cross-selling value-added services such as mobility, SD-WAN, security and unified communications. For instance, Comcast Business reported that, compared to 2023, its enterprise customers are spending three times as much on value-added services as on core connectivity services. Comcast Business will also increase wireless revenue from larger businesses in 2026 through its new MVNO agreement with T-Mobile. The agreement covers up to 1,000 lines per account, compared with the 20-line-per-account limit under its existing B2B MVNO agreement with Verizon, enabling Comcast to begin targeting the midmarket with wireless offerings.

Comcast Business scales AI across its portfolio, network and operations

Comcast is expanding its use of AI from targeted, efficiency-driven applications to a more pervasive, embedded role across its network, solutions portfolio and customer engagement model. AI is now integrated across key areas, including network optimization, cybersecurity, sales enablement and customer experience, and is improving operational efficiency through internal use cases such as automated RFP development, deep research and meeting summarization. AI integration is enabling Comcast to automate over 99.7% of software changes across its network, supporting self-healing capabilities that can quickly resolve outages and, over time, help improve customer retention.

Comcast expects AI not only to enhance network and operational efficiencies but also to create meaningful revenue-generation opportunities, though the company remains in the early stages of developing monetization strategies. For example, Comcast’s edge computing capabilities support ultra-low latency of less than 1 millisecond for many customers, positioning the company to enable advanced AI-driven applications such as AR/VR, which depend more heavily on low latency than text-based use cases do. Comcast Business is also exploring customer-facing AI use cases, including small-business concierge agents designed to manage front-desk functions such as greeting customers, scheduling appointments and handling routine inquiries, highlighting the potential to extend AI-driven value beyond internal operations and into new revenue opportunities.

The launch of Comcast Business Innovation Labs will accelerate the development of enterprise solutions

The company is advancing its enterprise strategy through the formal launch of Comcast Business Innovation Labs, an initiative designed to codevelop and rapidly scale first-to-market solutions for midmarket and enterprise customers. The labs bring together Comcast Business, customers and a broad ecosystem of technology partners to address specific business challenges, reflecting a more demand-driven approach to innovation. A key focus for Comcast Business Innovation Labs is supporting edge and AI-driven use cases by leveraging Comcast’s network capabilities and partner ecosystem.

Initial programs launched under Comcast Business Innovation Labs include a partnership with Dell Technologies to deliver managed edge compute for AI and real-time applications, and a partnership with Digital Realty to enable seamless hybrid and multicloud connectivity through data center fabric services. Comcast Business is also collaborating with Expedient to support three core capabilities: AI operations at scale via Expedient’s Secure AI CTRL services, private cloud as a cost-efficient environment for workloads, and managed disaster recovery for mission-critical applications.

TBR believes Comcast Business Innovation Labs strengthens the company’s ability to differentiate through ecosystem-driven innovation and faster solution development cycles, particularly as enterprise customers seek more tailored, outcome-based offerings. However, the long-term impact of the initiative will depend on Comcast Business’ ability to scale these solutions beyond pilot environments and integrate them effectively across its broader portfolio and go-to-market strategy.

Conclusion

The 2026 Comcast Business Analyst Conference highlighted the company’s evolution from a connectivity-focused provider to a solutions-oriented partner for enterprise customers. Comcast Business’ ability to surpass $10 billion in annual revenue and sustain double-digit enterprise growth underscores the effectiveness of its upmarket strategy, supported by acquisitions, global partnerships and an expanding portfolio of value-added services.

However, the SMB segment, which accounts for the majority of Comcast Business’ revenue, is becoming increasingly challenging as FWA competition and macroeconomic pressures drive greater pricing sensitivity. To retain and grow its SMB base amid these headwinds, Comcast Business will need to further strengthen its value proposition.