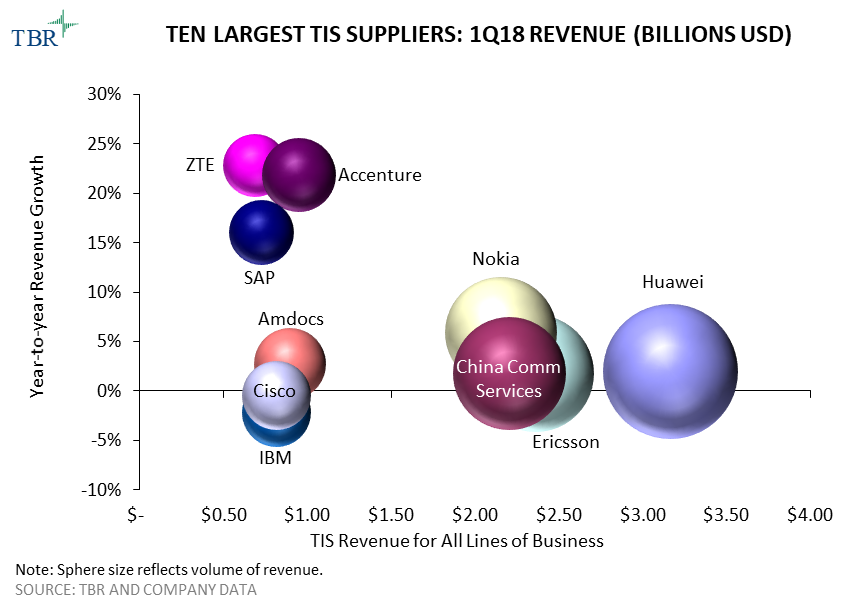

Telecom vendors anticipate revenue from 5G in select countries as early as 2H18, but lower China capex drove market decline in 1Q18

HAMPTON, N.H. (June 29, 2018) — According to Technology Business Research, Inc.’s (TBR) 1Q18 Telecom Vendor Benchmark, the conclusion of LTE coverage projects in China hampered revenue for the largest vendors. A reduction in demand from telecom operators for routing and switching products also caused revenue to decline for Cisco, Juniper and Nokia. In this market downturn, vendors are employing various strategies to maintain margins and mitigate revenue declines while eyeing initial commercial 5G rollouts, which are set to begin in the U.S. in 2H18.

“Suppliers are trying to sell into the IT environments of operators, engaging with the webscale customer segment, and expanding software portfolios to partially offset falling telecom operator capex,” said TBR Telecom Senior Analyst Michael Soper. “The shift in the revenue mix toward software is also helping vendors maintain operating margins in spite of lower hardware volume. Vendors are also growing their use of automation and artificial intelligence in service delivery to improve profitability by reducing their reliance on human resources.”

Western-based vendors are preparing their portfolios to build out 5G for U.S.-based operators in 2H18. Several operators have aggressive 5G rollout timetables and intend to leverage the technology for fixed wireless broadband and/or to support their mobile broadband densification initiatives. Vendors that have high exposure to the U.S. and are well aligned with market trends such as 5G, media convergence and digital transformation will likely increase market share over the next two years as operators in the region are expected to aggressively invest in these areas starting in 2H18.

The Telecom Vendor Benchmark details and compares the initiatives and tracks the revenue and performance of the largest telecom vendors in segments including infrastructure, services and applications and in geographies including the Americas, EMEA and APAC. The report includes information on market leaders, vendor positioning, vendor market share, key deals, acquisitions, alliances, go-to-market strategies and personnel developments.


For additional information about this research or to arrange a one-on-one analyst briefing, please contact Dan Demers at +1 603.929.1166 or [email protected].

ABOUT TBR

Technology Business Research, Inc. is a leading independent technology market research and consulting firm specializing in the business and financial analyses of hardware, software, professional services, and telecom vendors and operators. Serving a global clientele, TBR provides timely and actionable market research and business intelligence in a format that is uniquely tailored to clients’ needs. Our analysts are available to further address client-specific issues or information needs on an inquiry or proprietary consulting basis.

TBR has been empowering corporate decision makers since 1996. For more information, please visit www.tbri.com.