From Ecosystem to Execution, NVIDIA Shapes How AI Is Built and Run

Orchestrating an event with as many attendees as the company has employees is a feat in and of itself but is fitting for the company at the center of the AI movement. With the market and NVIDIA’s innovation moving at warp speed, what stands out is how each NVIDIA GTC event builds on themes from the prior year while providing a road map for the future of the industry. Inference was the unofficial theme of last year’s event, while GTC 2026 centered on agentic AI.

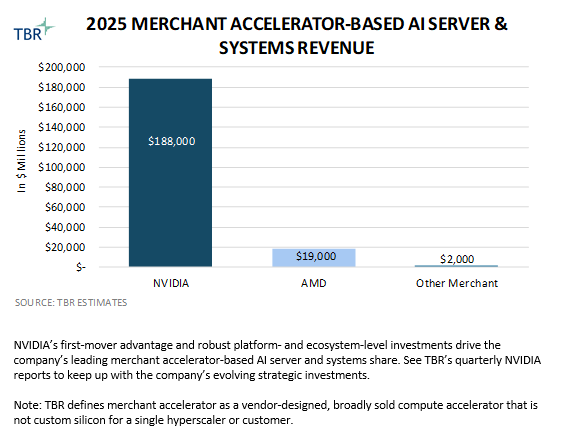

NVIDIA GTC 2026 served as a clear demonstration of the company's gravity at the center of the AI ecosystem. Its 30,000 attendees highlighted arguably the company's clearest competitive advantage: a robust and growing ecosystem of developers and partners, built on its early lead and maintained through constant innovation. As the future of AI workloads places greater emphasis on inference, and with NVIDIA having cemented its stranglehold on frontier model training workloads, the event showcased how the company is leveraging its investments to support the future of agentic AI and physical AI, both of which are inference-heavy applications.

NVIDIA sees AI as a five-layer cake, with energy representing the base, and chips, infrastructure, models and applications serving as the other four layers. Although NVIDIA is becoming increasingly vertically integrated through its investments in each layer, as a platform provider the company continues to emphasize horizontal openness, relying on its partners to leverage NVIDIA’s reference architectures, developer resources and solutions frameworks to ultimately deliver AI solutions to end customers.

As AI inference takes hold and agentic AI gains momentum, NVIDIA recognizes that AI systems must evolve to meet the changing demands of tomorrow’s workloads. Previous NVIDIA GPU cycles focused on training larger models and achieving benchmark improvements, but beginning with Grace Blackwell and now Vera Rubin, NVIDIA is shifting the accelerated compute narrative once dominated by the GPU to focus on rack-scale systems, which are becoming the new unit of high-performance AI computation.

From an architectural and design perspective, this transition represents a shift from GPUs optimized for AI training to rack-scale systems optimized to support agentic AI’s always-on inference demands that place a premium on balancing latency, throughput and cost efficiency.

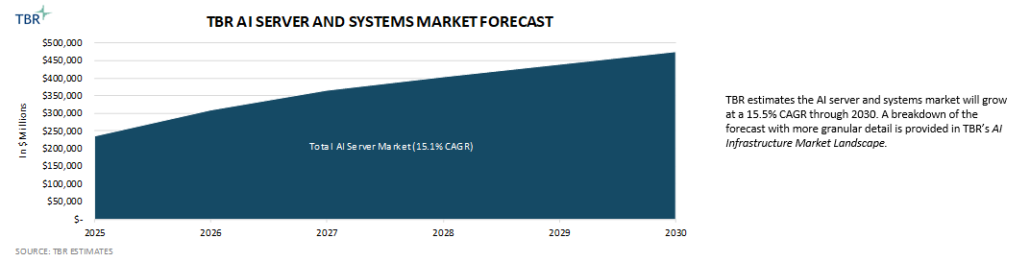

As a result of this fundamental workload change, the economic model of AI is evolving and the key output has moved from model accuracy alone to include the amount of useful work produced, measured in tokens, actions and completed tasks. As such, enterprises are beginning to view AI infrastructure as a production asset rather than a cost center tied to experimentation, and NVIDIA is doubling down on its AI factory narrative, connecting token generation to revenue creation and efficiency to profitability.

Beyond its product announcements at GTC 2026, NVIDIA demonstrated a coordinated effort to redefine the full AI stack, from silicon and systems to the software that supports agentic AI. In doing so, NVIDIA is shaping not only how AI is built but also how AI is operationalized and monetized.

NVIDIA reframes AI value around efficiency, output and revenue generation

At the core of NVIDIA's message at GTC 2026 was a shift in performance metrics. In the past, AI infrastructure was judged almost entirely on FLOPS (floating-point operations per second) and training speed. Now NVIDIA is pushing a narrative centered on performance per watt and tokens per watt, rooted in two ideas: AI computing is energy constrained, and inferencing, especially agentic AI, will drive AI compute demand orders of magnitude larger than anything the industry has ever seen.

Additionally, tokens per watt connects more clearly to revenue and cost of revenue in the minds of IT buyers, a link NVIDIA needs to make explicit as organizations increasingly architect and adopt agentic AI solutions and seek clearer ROI.
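
To make the metric concrete, here is a minimal sketch, assuming "tokens per watt" means sustained tokens per second divided by average power draw (equivalently, tokens per joule). The `RackProfile` class, its methods and every number in the usage lines are hypothetical and illustrative, not NVIDIA figures or an NVIDIA tool; the sketch only shows how the metric flows through to energy cost per token and to a crude revenue-minus-power margin.

```python
from dataclasses import dataclass

SECONDS_PER_DAY = 86_400
JOULES_PER_KWH = 3.6e6


@dataclass
class RackProfile:
    """A hypothetical inference rack; all figures passed in are illustrative."""
    name: str
    tokens_per_second: float  # sustained output throughput
    power_watts: float        # average power draw at that throughput

    def tokens_per_watt(self) -> float:
        # (tokens/s) / W = tokens per joule
        return self.tokens_per_second / self.power_watts

    def tokens_per_kwh(self) -> float:
        return self.tokens_per_watt() * JOULES_PER_KWH

    def energy_cost_per_million_tokens(self, usd_per_kwh: float) -> float:
        # Electricity cost attributable to one million output tokens
        return usd_per_kwh * 1e6 / self.tokens_per_kwh()

    def daily_margin_over_power(self, usd_per_million_tokens: float, usd_per_kwh: float) -> float:
        """Token revenue minus electricity for one day; ignores capex, cooling and idle time."""
        tokens_per_day = self.tokens_per_second * SECONDS_PER_DAY
        revenue = tokens_per_day / 1e6 * usd_per_million_tokens
        energy_kwh = self.power_watts * 24 / 1000
        return revenue - energy_kwh * usd_per_kwh


# Same hypothetical 100 kW power budget, different efficiency.
rack_a = RackProfile("rack_a", tokens_per_second=1_000_000, power_watts=100_000)
rack_b = RackProfile("rack_b", tokens_per_second=2_000_000, power_watts=100_000)

for rack in (rack_a, rack_b):
    print(
        rack.name,
        f"{rack.tokens_per_watt():.1f} tokens/W",
        f"${rack.energy_cost_per_million_tokens(usd_per_kwh=0.10):.4f} energy per 1M tokens",
        f"${rack.daily_margin_over_power(usd_per_million_tokens=0.50, usd_per_kwh=0.10):,.0f} per day over power",
    )
```

Because both hypothetical racks draw the same power, doubling tokens per watt doubles token revenue while the electricity bill stays flat, which is the link between efficiency and profitability that the AI factory framing relies on. A real ROI model would also need capex, cooling overhead and utilization.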

This framing explicitly positions tokens as the output of AI factories, analogous to the goods produced by traditional factories, echoing NVIDIA leadership's messaging during GTC 2025. Shifting the perception of AI factories from experimental (and, at best, opex-reducing) projects to revenue-generating assets makes AI infrastructure investments more compelling. It also adds another dimension to infrastructure decision making, one that prioritizes the cost and efficiency of sustained output over time ahead of maximum peak performance.

Rack-scale systems increasingly define NVIDIA’s AI strategy and infrastructure stack

Although NVIDIA is now framing its generational innovation around rack-scale systems, the company’s GPU improvements continue to play a critical role in driving improved efficiency. For example, compared to Hopper, Blackwell placed greater emphasis on inference and was designed to support mixed workloads and enable higher sustained throughput. Rubin, Rubin Ultra and Feynman will focus on delivering step-function improvements in these areas while maintaining growth in raw training performance.

NVIDIA updated its GPU with Rubin and is also updating its CPU for the first time since 2023, with Vera designed for single-threaded performance in support of agentic workloads. NVIDIA is pairing one Vera CPU with every two Rubin GPUs in Vera Rubin node configurations, but Vera CPUs will also be available on their own, with Meta recently making a large investment in Vera racks. On the compute side, in addition to a new CPU and GPU, NVIDIA is also introducing the language processing unit (LPU) in its upcoming LPX racks to support the most demanding inference workloads, leveraging technology acquired through its December purchase of Groq.

With the addition of the LPU driving higher potential tokens-per-second output, NVIDIA is making its computing stack, which previously comprised GPUs and CPUs, even more heterogeneous. To enable interoperability and the most efficient use of Vera Rubin racks augmented by Groq 3 LPX racks, NVIDIA will use Dynamo, originally announced at GTC 2025, to disaggregate inference workloads and intelligently route them across compute assets.
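
The idea of disaggregating inference and routing it across heterogeneous hardware can be illustrated with a toy scheduler. This is a minimal sketch, not Dynamo's API or algorithm: it assumes each request splits into a prefill phase (processing the prompt) and a decode phase (generating output tokens), and greedily assigns each phase to whichever pool would finish it soonest given the work already queued there. The `Pool`, `Request` and `route` names, the pool names and all throughput rates are hypothetical and do not describe the real capabilities of any NVIDIA rack.

```python
from dataclasses import dataclass


@dataclass
class Pool:
    """A hypothetical pool of accelerators with illustrative per-phase throughput."""
    name: str
    prefill_tokens_per_s: float  # 0 means this pool does not serve prefill
    decode_tokens_per_s: float   # 0 means this pool does not serve decode
    queued_seconds: float = 0.0  # work already booked on this pool

    def finish_time(self, phase: str, tokens: int) -> float:
        """Estimated time at which this pool would finish `tokens` of `phase` work."""
        rate = self.prefill_tokens_per_s if phase == "prefill" else self.decode_tokens_per_s
        if rate <= 0:
            return float("inf")
        return self.queued_seconds + tokens / rate


@dataclass
class Request:
    prompt_tokens: int  # processed in the prefill phase
    output_tokens: int  # generated in the decode phase


def route(request: Request, pools: list[Pool]) -> dict[str, str]:
    """Greedily assign each phase to the pool with the earliest estimated finish time."""
    plan: dict[str, str] = {}
    for phase, tokens in (("prefill", request.prompt_tokens), ("decode", request.output_tokens)):
        best = min(pools, key=lambda p: p.finish_time(phase, tokens))
        finish = best.finish_time(phase, tokens)
        if finish == float("inf"):
            raise ValueError(f"no pool can serve the {phase} phase")
        best.queued_seconds = finish  # book the work so later requests see the load
        plan[phase] = best.name
    return plan


pools = [
    Pool("throughput_pool", prefill_tokens_per_s=50_000, decode_tokens_per_s=2_000),
    Pool("latency_pool", prefill_tokens_per_s=0, decode_tokens_per_s=10_000),
]
print(route(Request(prompt_tokens=8_000, output_tokens=500), pools))
```

A production router would also have to account for the dependency between phases (decode cannot start before prefill finishes), the cost of moving KV cache between pools and cache locality; the greedy earliest-finish rule here only shows why mixing pools with different strengths can raise overall throughput.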

Adjacent to compute-centric investments, NVIDIA continues to lean into networking — an increasingly critical component of the AI infrastructure stack — to support the growing performance of its systems. At GTC 2025 NVIDIA announced copackaged optics to reduce power consumption, and the company displayed both InfiniBand and Ethernet Photonics switches leveraging the technology during GTC 2026.

Additionally, while NVIDIA continues to use copper as much as possible for scale-up networking, the company noted that to enable massive scale-up with Feynman NVL 1152, it will use optics instead of copper because of the greater physical distances involved. However, Rubin Ultra NVL 144 scale-up will leverage copper, enabled by NVIDIA's new Kyber rack architecture, which rotates compute trays into a vertical blade orientation to reduce the physical distance from each node to the rack's networking spine.

As AI workloads evolve, the locus of innovation is shifting from individual chips to entire systems. NVIDIA’s announcements around Vera Rubin make it clear that rack-scale systems, not just GPUs, are rapidly becoming the new unit of AI computing and the fundamental unit of competition, particularly at the service provider level. This reflects the growing importance of tightly integrated and codesigned compute, networking, storage and cooling.

To support its OEM and ODM partners, NVIDIA continues to update its MGX platforms, which consist of modular reference architectures that give partners an easier and faster way to design their own systems and bring them to market. Additionally, at GTC 2026, NVIDIA announced its Vera Rubin DSX AI Factory reference design and Omniverse DSX Digital Twin Blueprint. By incorporating digital twin capabilities through Omniverse, NVIDIA is enabling customers to simulate and optimize data center configurations before physical deployment, driving efficiencies through design, reducing deployment risk and better demonstrating ROI before massive capital is committed.

Storage is another critical component of the AI infrastructure stack, especially as inferencing workloads gain momentum. The introduction of NVIDIA’s STX data platform reference architecture extends NVIDIA’s reference architecture-driven strategy by emphasizing modular, scalable storage infrastructure tailored to the demands of agentic AI workloads.

Security and privacy guardrails anchor NVIDIA’s OpenClaw agent strategy

As AI shifts toward agentic systems, the software layer is becoming a more important control point than model weights. The value increasingly sits in the runtime, orchestration, tool use and governance layers that allow agents to reason, act and operate continuously across environments. NVIDIA used GTC 2026 to position itself around that control layer, pairing open-model initiatives with software intended to make autonomous agents more deployable and more manageable.

NVIDIA's big announcement supporting this was NemoClaw for the OpenClaw platform. NemoClaw installs OpenClaw, Nemotron models and NVIDIA's new OpenShell runtime in a single command, adding privacy and security controls for always-on autonomous agents. The company described OpenShell as an isolated sandbox with policy-based security, network and privacy guardrails, plus a privacy router that allows agents to leverage a mix of local open models and frontier models in the cloud, essentially addressing the would-be barriers to commercial OpenClaw adoption.

Rather than trying to own every layer of the agent ecosystem outright, NVIDIA is inserting itself at the layer that makes open agents safe and enterprise-ready, understanding that as agentic AI scales, organizations will need tooling for permissions, privacy, sandboxing and hybrid execution. NVIDIA wants that operating layer to sit on top of its own compute platforms and is supporting NemoClaw across RTX PCs, RTX PRO workstations, DGX Station and DGX Spark. Essentially, NVIDIA is trying to make agent infrastructure portable from local systems to larger AI environments while keeping the workload in its hardware orbit.

NVIDIA’s broader model strategy also reinforces this. At GTC 2026 NVIDIA announced its Nemotron Coalition, bringing together Black Forest Labs, Cursor, LangChain, Mistral AI, Perplexity, Reflection AI, Sarvam and Thinking Machines Lab to build open frontier models on DGX Cloud, with the first coalition project set to underpin the upcoming Nemotron 4 large language model (LLM) family. NVIDIA’s pitch is that open models are the foundation for a broader agent ecosystem. However, open does not necessarily mean hardware-neutral, and NVIDIA is trying to ensure that the open agent stack still runs most effectively on NVIDIA infrastructure.

Additionally, NVIDIA is not limiting agents to digital workflows. During GTC 2025 NVIDIA President and CEO Jensen Huang described physical AI as the next frontier of AI. At GTC 2026 NVIDIA positioned physical AI across robotics, automotive, industrial systems and digital twins as the next major phase of agentic AI, implying that the agent stack is expanding from software assistants into systems that can perceive, reason and act in the physical world.

The physical AI-enabling layer is NVIDIA’s simulation-to-deployment stack built on Omniverse, Cosmos, Isaac and GR00T. At GTC 2026 NVIDIA highlighted new Isaac simulation frameworks alongside Cosmos and Isaac GR00T models to support the development, training and deployment of intelligent robots. The company’s Physical AI Data Factory Blueprint aims to standardize and automate data generation, augmentation and evaluation for robotics, vision AI agents and autonomous driving.

In combination, these announcements underscore that physical AI is not a separate category but rather a direct extension of the same agentic AI life cycle, from simulation and training to orchestration and deployment. NVIDIA is building the models, runtime and infrastructure required to move agents from digital environments into real-world systems, making physical AI the natural progression of the company's agentic software strategy.

Conclusion

NVIDIA GTC 2026 reinforced that the company is defining not only the pace of AI innovation but also the structure of the AI industry itself. The shift to agentic AI is forcing a rethinking of how compute is designed, deployed and monetized, and NVIDIA is responding with a robust, coordinated strategy that spans silicon, systems, software and models. From tokens-per-watt economics to rack-scale architectures and open agent frameworks, NVIDIA is aligning every layer of its stack to support always-on inference workloads and the emergence of AI as a revenue-generating asset.

At the same time, NVIDIA's increasing emphasis on physical AI signals that the company's ambitions extend well beyond digital workloads. By linking its agent software stack with simulation, robotics and autonomous systems, NVIDIA is positioning itself as the foundational platform for both virtual and real-world AI applications. GTC 2025 established the importance of inference; GTC 2026 clarified that the next phase of AI will be defined by agents, and NVIDIA is building the infrastructure to power them from end to end.

Technology Business Research, Inc.
