Next 2026: Lakehouse and Agentic PaaS Push Google Cloud Closer to the Center of AI Value Creation

Google Cloud’s time has come

A few years ago, one could have argued that Google Cloud — with its advanced engineering and focus on “build versus buy” — was too early to market for enterprise AI. But today, as AI becomes more prominent in cloud discussions, Google Cloud’s days of weaker relative enterprise mindshare are fading. TBR research suggests AI is fueling multicloud adoption, and customers will enlist the right cloud for the right workload, elevating consideration of Google Cloud amid two big market shifts:

- AI consumption expands from embedded to custom

- The PaaS layer evolves beyond model access, incorporating more advanced AI development, orchestration and management, particularly for agentic systems

All hyperscalers tout themselves as “full-stack” to a degree, but Google Cloud’s distinct advantage is that it owns a leading frontier model, Gemini. Having Gemini deeply embedded throughout the portfolio creates a powerful flywheel effect that lets Google Cloud monetize AI in ways others cannot. At the same time, the value is shifting from the AI models themselves to how those models work with a growing set of tools and data to create value. From a repackaged PaaS layer to a revamped data stack, announcements at Google Cloud Next 2026 reinforce that this will be the company’s next chapter.

Everything is being reframed as agentic

One of the big takeaways from Google Cloud Next was how core areas of the portfolio are being repositioned as agentic: Vertex AI evolves into the Gemini Enterprise Agent Platform, Data Cloud morphs into the Agentic Data Cloud, and Workspace Intelligence launches as a feature dedicated to agentic work. In most cases, Google Cloud’s new agentic offerings are not net-new and instead subsume many existing services and capabilities. This is particularly true of Gemini Enterprise Agent Platform, which will be the new home for all Vertex services.

Nonetheless, it underscores a significant shift in where the market is headed and Google’s desire to compete not just in the model access layer via Vertex AI and Model Garden, but also in agent management, development and orchestration as part of a broader platform.

In practice, the same platform, carried over from Vertex AI, still powers the Gemini Enterprise app, which, at over 8 million paid seats across nearly 3,000 companies, is gaining traction as a workplace AI assistant, at least within the Google ecosystem. From a go-to-market perspective, however, Google Cloud can now at least deliver a single Gemini Enterprise family that includes both the Enterprise Agent Platform and the Gemini Enterprise application.

At what point do customers push back on platform sprawl?

Google Cloud’s approach with Gemini Enterprise is notable and may help transition Gemini Enterprise from a “nice to have” productivity tool to the core enabler of a business process. But it also bets that customers are willing to accept yet another platform. Long before ChatGPT, platforms had emerged as critical to digital transformation, offering consistency and reducing integration complexity.

But in the AI/agentic AI era, vendors are quickly launching new platforms for navigating agents and, in doing so, risk repeating the mistakes of the legacy software industry, feeding the very sprawl customers have been trying to avoid. This risk is not specific to Google, and to be clear, Gemini Enterprise Agent Platform is an extension of the existing solution. Time will tell whether customers perceive it as such.

Google elevated its Data Cloud portfolio in a big way

The data lakehouse backstory

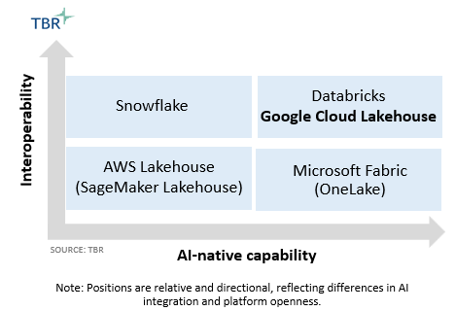

The concept of storing data from different tools in one architecture has quickly become standard practice in the data cloud landscape. All vendors, from analytics leaders like Snowflake and Databricks to the most siloed hyperscalers, have rallied around the lakehouse architecture as a way to address the pitfalls of traditional data warehouses. Unlike lakehouses, traditional data warehouses and data lakes do not handle both structured and unstructured data side by side, a capability that has become increasingly essential in AI development.

The lakehouse has redefined a core architectural principle and driven the emergence of open table formats like Apache Iceberg, which has since moved to the center of nearly every data cloud vendor’s stack. Apache Iceberg is now widely viewed as the standard for interoperability, offering a shared table layer that ensures the same data is understood regardless of tool or engine. This adds value for customers looking to sidestep some of the data silos reinforced by proprietary platforms, including the hyperscalers themselves.
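
To make the idea of a shared table layer concrete, here is a minimal toy sketch in Python. It is not Apache Iceberg's API or file layout; every name in it (`TableMetadata`, `Snapshot`, `FILES`, the two "engine" functions) is a hypothetical stand-in. It only illustrates the principle that two different engines resolve the same schema and the same set of data files from one piece of table metadata, so they agree on what the table contains.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    """One committed version of the table: an id plus the files it covers."""
    snapshot_id: int
    data_files: tuple


@dataclass(frozen=True)
class TableMetadata:
    """Toy stand-in for a shared table layer (assumption, not Iceberg's format)."""
    schema: tuple      # column names, in order
    snapshots: tuple   # committed snapshots, oldest first

    def current(self) -> Snapshot:
        return self.snapshots[-1]


# Hypothetical in-memory "object store": file path -> raw rows.
FILES = {
    "f1.parquet": [(1, "retail"), (2, "healthcare")],
    "f2.parquet": [(3, "financial services")],
}

ORDERS = TableMetadata(
    schema=("id", "sector"),
    snapshots=(
        Snapshot(1, ("f1.parquet",)),
        Snapshot(2, ("f1.parquet", "f2.parquet")),
    ),
)


def scan(table: TableMetadata) -> list:
    """Resolve rows through the table metadata, never by guessing at files."""
    rows = []
    for path in table.current().data_files:
        rows.extend(dict(zip(table.schema, raw)) for raw in FILES[path])
    return rows


def sql_engine_count(table: TableMetadata) -> int:
    """A hypothetical SQL-style engine: counts rows in the current snapshot."""
    return len(scan(table))


def ml_engine_sectors(table: TableMetadata) -> list:
    """A hypothetical ML-style engine: extracts distinct feature values."""
    return sorted({row["sector"] for row in scan(table)})


if __name__ == "__main__":
    print(sql_engine_count(ORDERS))
    print(ml_engine_sectors(ORDERS))
```

Because both functions go through the same metadata, a new snapshot committed by one engine becomes the view every other engine sees, which is the interoperability property the paragraph above describes.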

Google Cloud finally goes true lakehouse

We all know Google Cloud’s strengths at the core data warehouse layer with BigQuery. Enterprise IT decision makers, particularly those in retail, healthcare and financial services, often tell TBR that BigQuery is unrivaled in its ability to handle petabytes of data at scale. Much like its peers, Google Cloud has worked to elevate its data lake positioning over the years, releasing BigQuery-adjacent offerings like BigLake and BigLake tables.

Although these have slowly expanded support for open formats, including Iceberg, proprietary formats still took precedence, raising questions about how “open” Google Cloud’s platform really is. Not only that, but by acting as more of an extension layer for BigQuery, BigLake was never truly an integrated lakehouse platform comparable with the likes of Databricks.

However, this is changing with the launch of Google Cloud Lakehouse. Although it is an extension of BigLake, it has characteristics that make it a more complete data lakehouse, including what Google Cloud claims is 100% Iceberg compatibility and a natively embedded governance catalog called Knowledge Catalog (formerly Dataplex) that can help manage metadata around Iceberg tables. Traditionally, the cataloging layer has not been a major focus for Google Cloud, but Knowledge Catalog within the Lakehouse is a strong recognition by Google that the value lies not in the format in which data is stored, but in how that data is governed and managed.

Google raises the bar for AWS and Azure on openness … and AI

Google Cloud has always positioned itself as open and leaned into this theme at Next 2026 by reinforcing its commitment to the Apache Iceberg ecosystem. For context, over the last 12 months Google Cloud has tripled the number of customers using Iceberg on Google Cloud Platform (GCP), with those customers collectively managing hundreds of petabytes of data.

However, Google Cloud is not only promoting openness in how data is stored, but also where it is stored. For example, Google Cloud announced Cross-Cloud Lakehouse, a multicloud extension of the Google Cloud Lakehouse architecture that enables customers to work with data stored in both Amazon Web Services (AWS) and Azure.

Though offering multicloud interoperability is not necessarily groundbreaking, given what Snowflake and Databricks already offer, Google’s move poses a big test for the other two hyperscalers, which continue to prioritize data control despite what they may be messaging to the market.

This move is an interesting strategic shift for Google Cloud. Data analytics has always been a strong suit for Google Cloud, and the company has been largely defensive of BigQuery for years. But by expanding multicloud support, Google Cloud’s stance becomes less about controlling the data and more about making room for AI.

Put simply, Google Cloud cares less about whether data resides outside GCP, as long as Gemini and other AI tools are used on that data to generate value.

What is next?

Federation does not equal unified control: Google Cloud’s Knowledge Catalog may address upper-stack governance, but it is not a true operational catalog like Databricks Unity Catalog, Snowflake Polaris and AWS Glue, which govern read/write access to the Iceberg tables themselves rather than focusing solely on higher-level metadata. Google Cloud connects to these external catalogs, but it still falls short of enabling customers to operate across the joint platforms in anything more than a federated manner. It will be interesting to see if Google Cloud moves in this direction and allows governed reads/writes across multiple catalogs as part of a shared platform.
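
The distinction between federation and unified control can be sketched with a small toy model in Python. This is an illustration of the concept only; it does not model Knowledge Catalog, Unity Catalog, Polaris or Glue, and the class names `OperationalCatalog` and `FederatedView` are hypothetical. The assumption being illustrated is that a federated layer can discover tables across catalogs, while each catalog still enforces its own grants independently.

```python
class OperationalCatalog:
    """Toy catalog that owns its tables and enforces read/write grants."""

    def __init__(self, name):
        self.name = name
        self.tables = {}     # table name -> list of rows
        self.grants = set()  # (user, table, action) triples

    def grant(self, user, table, action):
        self.grants.add((user, table, action))

    def read(self, user, table):
        if (user, table, "read") not in self.grants:
            raise PermissionError(f"{user} cannot read {self.name}.{table}")
        return list(self.tables[table])

    def write(self, user, table, row):
        if (user, table, "write") not in self.grants:
            raise PermissionError(f"{user} cannot write {self.name}.{table}")
        self.tables[table].append(row)


class FederatedView:
    """Toy federated layer: discovers tables, delegates enforcement to owners."""

    def __init__(self, catalogs):
        self.catalogs = {c.name: c for c in catalogs}

    def find(self, table):
        """List every catalog that holds a table with this name."""
        return [name for name, c in self.catalogs.items() if table in c.tables]

    def read(self, user, catalog, table):
        # No policy of its own: the owning catalog decides.
        return self.catalogs[catalog].read(user, table)


if __name__ == "__main__":
    lake = OperationalCatalog("lake")
    warehouse = OperationalCatalog("warehouse")
    lake.tables["orders"] = [{"id": 1}]
    warehouse.tables["orders"] = [{"id": 2}]
    lake.grant("ana", "orders", "read")  # granted in one catalog only

    view = FederatedView([lake, warehouse])
    print(view.find("orders"))
    print(view.read("ana", "lake", "orders"))
    try:
        view.read("ana", "warehouse", "orders")
    except PermissionError as err:
        print(err)
```

In this sketch the grant made in one catalog does not carry over to the other, so the federated layer offers visibility without a single policy. A unified approach would need one place where a grant applies across all catalogs.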

The catalog governs the data, but who governs the catalog? Apache Iceberg may be a trailblazer in enabling shared access across engines, but in doing so, it also introduces a governance problem, as multiple catalogs and compute engines now access the same data. This will undoubtedly create the need for governance across these catalogs, but it is still early days. Vendors that can unify governance across all catalogs and environments might have an advantage. Google Cloud’s evolving strategy suggests it could play a role if it chooses to do so. This could serve as a test of how “open” Google Cloud really wants to be.

Google is building a powerful SI network around Gemini Enterprise

According to TBR’s Cloud Voice of the Partner research, SI and ISV respondents ranked AI and agentic AI as the technology area that will drive the most channel growth over the next three years. This is not surprising and speaks to the recent efforts from all hyperscalers and major SaaS players to refocus partner programs around AI solutions; as one senior partner sales leader at a hyperscaler aptly put it: “If you are not articulating how GenAI [generative AI] will be used in a project, you’re not even in the conversation.”

That said, amid continued investment in AI with unclear or insufficient ROI, it is no longer enough for service partners to simply attach AI services. Rather, they must demonstrate measurable value and outcomes alongside a clear path to sustainable consumption for their big tech partners.

Google Cloud maintains its focus on an AI-first, partner-enabled growth model and recently announced a $750 million investment in partners, including for training SI partners around the Gemini Enterprise Agent Platform. Google Cloud reports that over 330,000 experts across the SI partner network are trained on Google AI.

For context, two years ago at Google Cloud Next, Google Cloud implied that nine core SIs (Accenture, Capgemini, Cognizant, Deloitte, HCLTech, KPMG, Kyndryl, McKinsey & Co. and PwC) planned to train over 200,000 experts in GenAI. We cannot accurately measure growth over the past two years because the current 330,000 figure now encompasses additional partners, including Boston Consulting Group, Infosys and Tata Consultancy Services, and likely spans a broader base of personnel. Even so, it speaks to the scale of investment happening.

AI drives business model change and creates misalignment throughout the ecosystem

Even as investments in training increase, ecosystem participants will still need to ensure they are aligned with one another as partners and with customers’ needs. Our Cloud Voice of the Partner research also revealed that, in an alliance, SIs value trust above all else, while IaaS and SaaS providers place greater importance on the flexibility of commercial models and proactive investment.

Ironically, the SIs have been proactive in adopting commercial flexibility, including outcome-based pricing, but their tech partners, especially hyperscalers, have not embraced it, and for good reason given the economics of the IaaS business model.

Even so, vendors continue to tout the importance of outcomes; in fact, Google Cloud recently revamped its partner program to reward partners that can show proof of successful outcomes along with financial contribution. This is just one of the structural mismatches caused by AI in the ecosystem, and it will require parties to realign, especially as AI natives mature as third-party ISVs and become more central to the conversation.

Final Thoughts

Google Cloud Next 2026 did not come off as another series of announcements and showcase of the latest and greatest features. It reflected a more deliberate shift in how Google Cloud aims to disrupt the crowded and fragmented AI market. With Google Cloud Lakehouse, Gemini Enterprise and an overall renewed interest in supporting other hyperscalers and more open standards, Google Cloud is putting the pieces in place to move beyond the AI model layer and compete in areas where enterprise value is increasingly recognized. The degree to which Google Cloud aligns its ecosystem to this strategy will help determine the extent of this disruption.

Getty Images via Canva Pro
