The Fragmentation of AI Infrastructure: 3 Forces Reshaping the Market

The AI infrastructure market is evolving rapidly across 3 core dimensions

It is increasingly clear that the AI infrastructure market is neither unified nor single-dimensional. What began as a relatively cohesive, GPU-driven infrastructure build-out is rapidly diverging along three structurally different yet interrelated axes:

- Training versus inference

- GPUs versus custom AI ASICs (application-specific integrated circuits)

- Cloud versus on-premises deployments

Together, these dynamics represent fundamental shifts in how AI servers and systems are architected, deployed and monetized, impacting the entire AI ecosystem.

As these divides deepen, the AI infrastructure market is effectively splitting into distinct segments with different buyers, economics and competitive dynamics. Vendors and customers that continue to treat AI infrastructure as a single, homogeneous market risk misallocating capital, overestimating growth opportunities and underestimating emerging competitive threats.

Training versus inference

Perhaps the most important divide in the AI infrastructure market is between training and inference workloads. Training prioritizes flexibility, scalability and rapid iteration, reinforcing GPUs as the foundation for frontier model development. Inference, however, operates under different constraints, where cost efficiency, power consumption and throughput take precedence, especially for hyperscalers. Flexibility still matters for enterprise workloads, which tend to be more variable and heterogeneous. These inference constraints are driving hyperscalers to deploy a growing number of custom AI ASICs optimized for cost per inference and energy efficiency.

TBR sees this shift as a reflection of a broader change in where value is created rather than an indication of a transition from one architecture to another. While training has driven initial infrastructure build-outs, inference represents the larger long-term opportunity as AI adoption expands across industries. As a result, the center of gravity in AI infrastructure is shifting toward production inference workloads, where efficiency and scale define competitiveness at the hyperscaler level, even as flexibility remains a key requirement across the broader market.

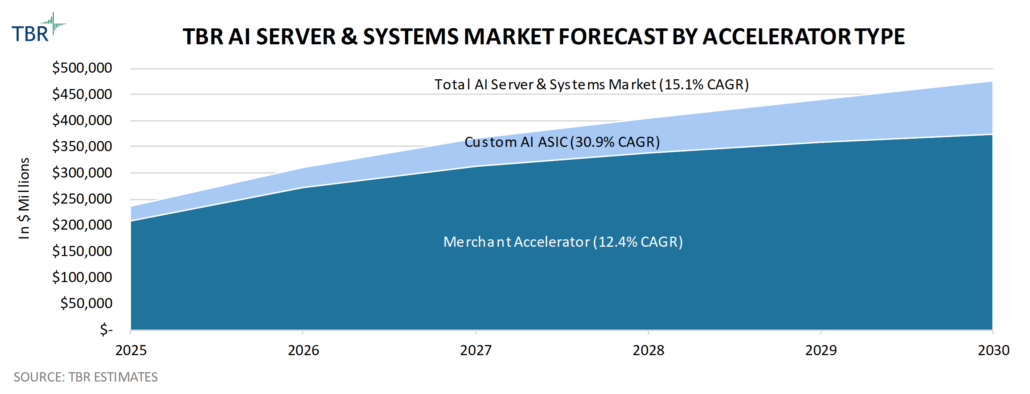

TBR forecasts the total AI server and systems market will eclipse $300 billion in 2026, growing at a rate north of 30% year-to-year, driven primarily by large-scale service provider AI infrastructure build-outs.

GPUs versus ASICs

The divergence between GPUs and custom AI ASICs reflects how different ecosystem players are positioning to capture this growing inference opportunity. Hyperscalers are investing in custom silicon to optimize performance, reduce costs and align infrastructure with large-scale, stable workloads, while also abstracting infrastructure through managed services to consolidate control higher up the stack.

At the same time, merchant accelerator vendors, such as Advanced Micro Devices (AMD) and NVIDIA, are not ceding the inference opportunity to hyperscalers and their custom AI ASICs. Instead, they are investing aggressively to bolster their platform-level capabilities through tightly integrated hardware and software stacks, managed infrastructure offerings and next-generation systems optimized for tokens-per-watt efficiency. As a result, competition is shifting from a hardware-centric model to a platform-level battle, where control over how AI infrastructure is delivered, consumed and monetized increasingly determines value capture and directly influences deployment models.
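The two efficiency metrics named in this discussion, tokens per watt and cost per inference, can be made concrete with a short sketch. The Python below is illustrative only: the `AcceleratorProfile` class, its field names and every number in it are hypothetical placeholders rather than TBR data or vendor measurements, and cost per inference is approximated here as cost per million tokens served.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceleratorProfile:
    """Hypothetical inference-serving profile; all figures are placeholders."""
    name: str
    tokens_per_second: float   # sustained output throughput while serving
    power_watts: float         # average power draw while serving
    cost_per_hour_usd: float   # assumed amortized hardware plus operating cost

    def tokens_per_watt(self) -> float:
        # (tokens/s) / W, which is equivalent to tokens per joule
        return self.tokens_per_second / self.power_watts

    def cost_per_million_tokens(self) -> float:
        # Hourly cost divided by hourly token output, scaled to 1M tokens
        tokens_per_hour = self.tokens_per_second * 3600
        return self.cost_per_hour_usd / tokens_per_hour * 1_000_000


# Placeholder inputs chosen only to show the arithmetic, not real benchmarks.
profiles = [
    AcceleratorProfile("merchant GPU (illustrative)", 10_000, 1_000, 4.00),
    AcceleratorProfile("custom ASIC (illustrative)", 8_000, 500, 2.00),
]

for p in profiles:
    print(f"{p.name}: {p.tokens_per_watt():.1f} tokens/s per watt, "
          f"${p.cost_per_million_tokens():.3f} per million tokens")
```

With these made-up inputs, the lower-throughput device still comes out ahead on both metrics because its power draw and hourly cost fall faster than its throughput. That is the kind of trade-off the efficiency framing is meant to surface, though real comparisons depend on measured workloads.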

Cloud versus on-premises deployments

While hyperscalers remain the largest demand vector behind the growing AI infrastructure market, the idea that AI workloads will be fully centralized in the cloud is already beginning to break down. Enterprises are encountering practical constraints, including data sovereignty requirements, data gravity, latency sensitivity and cost considerations, that are driving a more distributed deployment model.

At the same time, many organizations continue to face challenges related to data readiness and infrastructure complexity, which slow large-scale enterprise AI adoption and reinforce the need for hybrid approaches. As a result, TBR sees organizations deploying AI infrastructure across a mix of cloud, on-premises and edge environments — with that mix dictated by industry group and company size — rather than converging on a single deployment model.

This dynamic echoes previous technology cycles, in which organizations chose hybrid cloud architectures rather than centralizing all workloads in public or private cloud environments. However, TBR believes AI will create an even more fragmented and distributed infrastructure landscape, where deployment decisions are more closely tied to workload-specific requirements.

Implications of AI infrastructure market fragmentation

As these structural divides take hold, vendors are already being forced to make strategic trade-offs.

OEM strategies, for example, are diverging between high-volume, lower-margin deals with service providers and more targeted, higher-margin enterprise opportunities that emphasize integrated solutions and services. TBR views this divergence as a reflection of two broader realities: there is no single, unified go-to-market strategy for AI infrastructure, and demand and adoption are uneven across customer groups. As such, vendors must align their current portfolios and their alliance and investment strategies with specific market segments to optimize value capture rather than attempting to compete across all fronts simultaneously.

Upstream of the OEMs, NVIDIA’s near-monopoly position in the AI infrastructure market is gradually eroding as hyperscaler AI ASICs and other merchant accelerators vie for their place in the AI data center. AMD’s investments in rack-scale integrated systems and its emphasis on ecosystem openness put it in direct competition with NVIDIA in the merchant accelerator space, while hyperscaler AI ASICs pose an adjacent threat for share of the inference market. On the periphery, other vendors are also entering the merchant market with processors and systems architectures purpose-built for specific inference applications.

Winners will align to the right fragment — not the entire market

As fragmentation accelerates, competitive positioning will increasingly depend on market segment alignment.

- Hyperscalers will continue investing in the consolidation of control through infrastructure abstraction and the deployment of custom AI ASIC-based servers and systems.

- Merchant silicon vendors will reinforce their dominance in training and relevance in inference through platform- and ecosystem-level investments.

- OEMs will increasingly lean into their respective services-led, enterprise-focused models as demand diversifies beyond service providers.

- Enterprises will adopt hybrid AI strategies that balance cost, control and flexibility as a growing number of industry-specific use cases become better defined.

In this environment, there is no single winner across AI infrastructure. Instead, leadership will be defined within each segment, and success will depend on how effectively vendors align their strategies with the underlying structure of the rapidly evolving market.

Conclusion

Understanding how AI infrastructure is fragmenting — and where value is shifting as a result — is critical for forecasting demand, evaluating competitive positioning and aligning long-term strategy.

TBR’s AI Infrastructure Market Landscape provides a detailed analysis of these dynamics, including vendor performance, ecosystem developments and evolving market opportunities across the AI infrastructure stack. Preview the data and analysis in our latest AI Infrastructure Market Landscape.

Getty Images via Canva Pro

Technology Business Research, Inc.
