5 Ways Neocloud Will Disrupt the Original Cloud Disruptors

Neocloud providers challenge a decade of hyperscaler control

Before the explosion of AI interest in 2023, the hyperscalers had enjoyed a nearly unimpeded growth trajectory since 2017, when the last of the telco cloud competitors exited the business. Verizon, IBM, Rackspace and a multitude of others all started their own cloud platforms in a bid to challenge Amazon Web Services (AWS) in cloud infrastructure but ultimately exited and refocused on the periphery of the cloud market opportunity. Even the challenges posed by the General Data Protection Regulation (GDPR) and other regulations were ultimately not enough to dislodge the major cloud providers, as the three leading U.S.-based firms still hold the largest market share, even in the heavily regulated European Union markets.

More than a decade after it came into existence, the cloud infrastructure market remains a steady source of double-digit growth, with a total opportunity size of roughly $500 billion globally in 2025. The largest portion of this opportunity is claimed by AWS, Microsoft and Google Cloud, which together account for half of the total market. We expect the collective share of those three vendors to continue growing through 2029, but neocloud providers will cause real disruption and limit the leading hyperscalers’ nearly unimpeded ability to expand. Neocloud providers will not realistically capture leadership of the cloud infrastructure space, but they will disrupt the hyperscaler market in five ways: capturing AI-related market growth, pressuring pricing for AI workloads, building a platform ecosystem around their services, forcing hyperscalers to partner with major neocloud providers, and directly targeting enterprise customers.

It is not just the revenue but also the growth that hyperscalers will miss

The most direct and visible impact of neocloud providers will be the revenue they generate. The neocloud market is estimated to have surpassed $25 billion in 2025, representing triple-digit growth year-to-year, which makes it one of the fastest-growing segments of the overall cloud market. There is overlap, as many hyperscalers are also among the largest neocloud customers, but a group of companies capturing tens of billions of dollars and growing at a rapid pace still complicates the market for hyperscalers. CoreWeave, for instance, earns nearly $2 billion in revenue each quarter, a figure that is roughly doubling year-to-year.

Neocloud providers will also pressure hyperscale pricing and margins

CoreWeave’s nearly $2 billion in quarterly revenue is even more significant given the pricing advantages it offers its customers. Although the specifics vary based on a number of factors, in general, neocloud providers’ prices are 30% to 60% lower than what the major hyperscalers charge for the same services. That means CoreWeave is taking between $2.6 billion and $3.2 billion in market opportunity off the table from hyperscalers each quarter. The total revenue impact is even more severe after accounting for the pricing pressure those hyperscalers are forced to grapple with while trying to minimize the disparity between their AI service prices and neocloud offerings.
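The displaced-opportunity range depends on how the 30% to 60% price gap is read. The short Python sketch below is an illustration only, not TBR's published method, and the function name `hyperscaler_equivalent` is hypothetical. It assumes $2 billion in quarterly neocloud revenue and compares two readings: hyperscaler prices sit 30% to 60% above neocloud prices (a premium), or neocloud prices sit 30% to 60% below hyperscaler prices (a discount).

```python
def hyperscaler_equivalent(neocloud_revenue_b, pct, reading):
    """Estimate what the same usage would cost at hyperscaler prices.

    neocloud_revenue_b: neocloud revenue in billions of dollars (assumed input)
    pct: price gap as a fraction, e.g. 0.30 for 30%
    reading: "premium"  -> hyperscaler price = neocloud price * (1 + pct)
             "discount" -> neocloud price = hyperscaler price * (1 - pct)
    """
    if reading == "premium":
        return neocloud_revenue_b * (1 + pct)
    if reading == "discount":
        return neocloud_revenue_b / (1 - pct)
    raise ValueError("reading must be 'premium' or 'discount'")


QUARTERLY_REVENUE_B = 2.0  # assumption: roughly $2 billion per quarter

for reading in ("premium", "discount"):
    low = hyperscaler_equivalent(QUARTERLY_REVENUE_B, 0.30, reading)
    high = hyperscaler_equivalent(QUARTERLY_REVENUE_B, 0.60, reading)
    print(f"{reading}: ${low:.1f}B to ${high:.1f}B per quarter")
```

Under the premium reading, the result is $2.6 billion to $3.2 billion per quarter, which matches the range cited above. Under the discount reading, the same revenue would correspond to roughly $2.9 billion to $5.0 billion at hyperscaler prices, so the cited range can be viewed as the more conservative of the two estimates.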

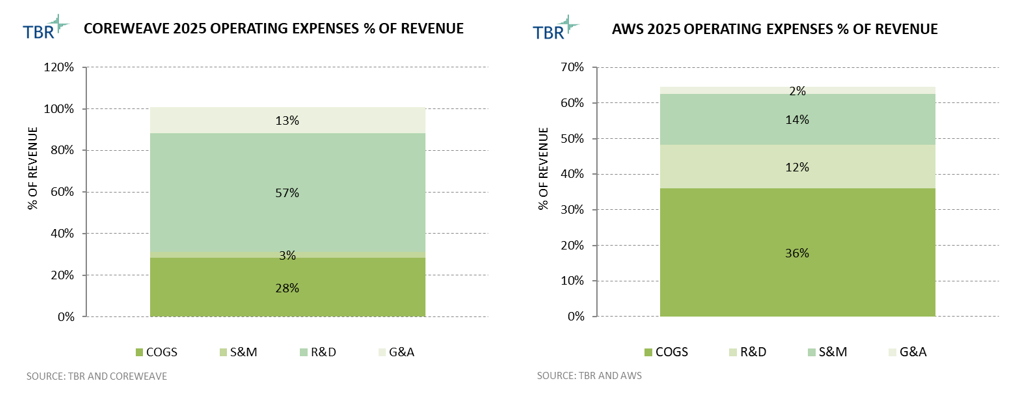

Hyperscalers’ expenses and margins will also reflect the impact of neocloud providers, whose operating models differ significantly from those of hyperscalers, as shown in Figure 1. CoreWeave, for instance, is operating at roughly break-even, reinvesting internally, largely through R&D and infrastructure build-outs. The pricing and investment pressures that neoclouds create will also weigh on AWS’ double-digit operating margins.

Figure 1: 2025 Operating Expenses as a Percentage of Revenue for CoreWeave and Amazon Web Services (Source: TBR)

Building out a platform will solidify neocloud competitive positioning

While selling access to the raw GPU capacity remains the primary way in which neoclouds are disrupting the hyperscale landscape, expanding into the platform layer of services will have a sustained impact. As GPU supply expands and competition intensifies, platforms have become critical for differentiation, customer retention and higher-margin services. This is a strategy straight out of the cloud hyperscaler playbook, as AWS, Microsoft and Google have entrenched themselves with customers through additional development, integration, marketplace and data services on top of their core infrastructure capabilities. Neoclouds, by layering orchestration, AI tooling and developer environments on top of specialized infrastructure, aim to move up the value chain and compete more directly with hyperscaler AI environments.

CoreWeave provides one of the clearest examples of this platform strategy. The company now positions its offering as a purpose-built AI cloud platform that combines high-performance infrastructure with intelligent software tools. The platform integrates services such as managed Kubernetes environments, GPU-native scheduling systems and AI storage layers designed to support large distributed training workloads. For example, the CoreWeave Kubernetes Service (CKS) and Slurm-on-Kubernetes orchestration stack allow customers to efficiently schedule massive GPU jobs and maximize cluster utilization.

Beyond orchestration, CoreWeave has expanded into tools that support the broader AI life cycle, including training infrastructure, inference deployment and integrations with AI development ecosystems. The company’s platform is designed to enable developers to build, train and serve AI models within a single environment, rather than relying on fragmented tooling across multiple providers. These additional services target some of the fastest-growing addressable markets for hyperscalers: large-scale AI training and inference. The impact becomes more distinct in the long term, as customer adoption of platforms is quite sticky, preserving neocloud advantages even as GPU scarcity and price-to-performance advantages potentially fade over time.

Hyperscalers are forced not only to compete with neoclouds but also to partner and purchase from them

Although there is a competitive element between hyperscalers and neoclouds, both groups also rely on each other to capitalize on the AI market opportunity. This reflects the explosive demand for AI compute and the limits of hyperscalers’ ability to build capacity quickly enough to meet that demand. Neocloud providers such as CoreWeave have built their businesses around specialized AI infrastructure and have an inherent advantage in the space due to their unique relationships with NVIDIA, which allow preferential access to supply-constrained GPUs. As demand for generative AI accelerates, these specialized environments have become valuable sources of additional compute capacity, even for the largest cloud providers. The result is a paradoxical relationship between hyperscalers and neoclouds.

On one hand, hyperscalers must compete with neoclouds for AI customers, particularly startups and AI labs seeking large GPU clusters at competitive prices. On the other hand, hyperscalers also benefit from accessing the infrastructure that neoclouds have rapidly built. In some cases, hyperscalers purchase compute capacity from neocloud providers to supplement their own data center supply while their internal AI infrastructure continues to scale.

This hybrid competitive and cooperative dynamic reflects a broader shift in the cloud market. AI infrastructure demand has grown so quickly that no single provider can fully control the supply of compute resources. As a result, the emerging AI cloud ecosystem is becoming more interconnected, with hyperscalers, neoclouds and infrastructure providers operating within a complex web of competition and collaboration. In the near term, this dynamic is likely to persist, limiting the aggressiveness with which neoclouds and hyperscalers compete.

Expanding to enterprise customers is the next stage of neocloud diversification

Although platform services and capabilities represent neoclouds’ functional diversification, enterprise customers represent diversification efforts within the neocloud customer base. The supply-constrained environment and tremendous amounts of investment made AI startups and hyperscalers lucrative early customers for neocloud providers. That concentrated customer base has created a significant market very quickly, but expansion and diversification represent the next phases in the market’s evolution. Given the areas of competition with hyperscalers, it is particularly important to expand the customer base to large enterprises that build and run their own AI models. To expand into enterprise customer accounts, neocloud providers are combining specialized AI infrastructure with enterprise-grade platforms and long-term capacity agreements that appeal to organizations deploying large-scale AI workloads.

For instance, beyond providing GPU-dense infrastructure optimized for AI training and inference, along with large clusters of NVIDIA accelerators connected through high-bandwidth networking, CoreWeave is targeting enterprise customers by building a platform around AI workload management and deployment. The company’s managed Kubernetes environments and GPU-aware scheduling capabilities allow enterprises to run large distributed training workloads more efficiently. Integrations with AI development tools and machine learning frameworks enable customers to build, train and deploy models within a unified environment rather than assembling multiple infrastructure and software layers independently. CoreWeave has also pursued enterprise adoption through large, multiyear compute agreements that give customers guaranteed GPU capacity while ensuring predictable revenue streams for the company. Although neoclouds’ efforts to diversify their customer bases are still new, the trend will eventually lead to greater stability for the providers as the AI and GPU markets mature.

Stefan Ionita, Canva Pro

JLGutierrez, Getty Images via Canva Pro

Technology Business Research, Inc.

Getty Images via Canva Pro