AI & GenAI Market Landscape

TBR Spotlight Reports represent an excerpt of TBR’s full subscription research. Full reports and the complete data sets that underpin benchmarks, market forecasts and ecosystem reports are available as part of TBR’s subscription service.

Updated: March 2026

AI adoption continues to reshape the alliances ecosystem, strengthening the case for multiparty alliances

Alliance ecosystems are restructuring around agentic AI capabilities, and IT services companies and consultancies must build region-specific AI ecosystem strategies that rely on hyperscalers, startups and IP-led frameworks.

Agentic AI strengthens the hyperscaler-SI relationship while also increasing competitive tension as hyperscalers embed more autonomous capabilities into their own platforms.

DXC Technology (DXC) intensified its reliance on hyperscaler alliances and added AI-specific partners to compensate for limited internal R&D capacity, deepening relationships with AWS, Microsoft Azure and Google Cloud to scale cloud and AI delivery. DXC also increased use of AI partnerships (e.g., ServiceNow) to accelerate AI-focused Fast Track solutions and introduced DXC Complete, simplifying SAP cloud migrations to Azure while embedding AI-enabled managed services.

HCLTech deliberately shifted from a technology-agnostic partner posture toward named, best-of-breed AI partnerships that are tightly coordinated with its acquisitions and industry strategy. The company expanded partnerships with hyperscalers, Databricks and Snowflake, complemented by startups and academic institutions. HCLTech’s alliances are now structured to codevelop and cosell AI-enabled industry solutions rather than only support horizontal services, and the company launched at least eight AI-enabled solutions aligned with ecosystem partners, including InsightGen (AWS-based) for financial services.

Kyndryl expanded from bilateral partnerships to orchestrated, multiparty alliances that combine AI, infrastructure and industry expertise, partnering with Hewlett Packard Enterprise (HPE) and NVIDIA to deliver HPE Private Cloud AI, an enterprise AI factory solution. In addition, Kyndryl strengthened its Microsoft alliance to enable AI-driven service delivery and automated data protection.

Microsoft broadened its alliance strategy beyond OpenAI-centric AI to a multimodel, governance-first ecosystem. For example, Microsoft partnered with Workday to deliver unified AI-agent governance across Azure AI Foundry, Copilot Studio and Workday systems of record, and expanded partnerships with Anthropic and NVIDIA, adding Claude models to Azure and securing GPU capacity.

Hyperscaler AI revenue is expanding rapidly as OpenAI and other model developers greatly expand their compute spend for model training

Hyperscaler AI Opportunity

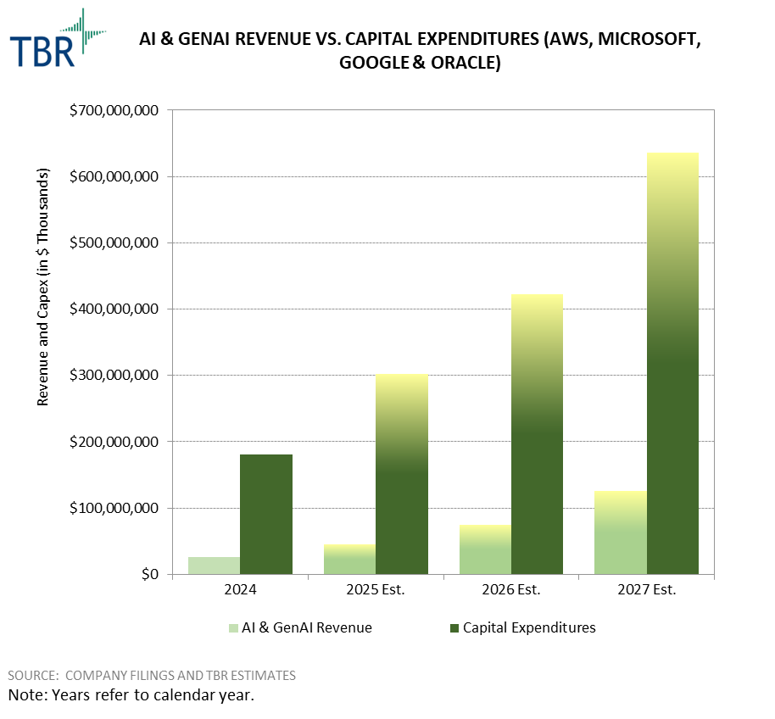

AI revenue recognized by the hyperscalers has been growing at a blistering rate (TBR estimates it will increase 73% year-to-year in 2025), and backlog commentary suggests the current elevated growth trajectory will continue over the coming years. Today, an outsized share of this AI revenue is driven by AI model developers, such as OpenAI and Anthropic, securing massive amounts of infrastructure capacity for current and future training workloads, adding concentration risk to hyperscalers’ backlog. Although financing appears to be secured at this point, the scale of the investments relative to the model developers’ current revenue base compounds the inherent concentration risk and suggests AI usage will scale dramatically over the next several years. Any downturn in sentiment or rise in market volatility could have an outsized impact, causing recognized revenue to deviate from current backlog projections.

Ultimately, diversifying AI revenue streams will be a major strategic objective for the hyperscalers, pushing them to penetrate deeper into the enterprise inference market. According to TBR’s recent cloud customer survey, over 77% of enterprise respondents reported that AI exceeded their value expectations, suggesting that early experimentation is yielding positive business outcomes. Hyperscalers’ top strategic objectives are to take advantage of this momentum, work with partners to unlock new use cases and continue to build new infrastructure.

As enterprises begin to pursue on-premises and hybrid AI, OEMs deepen roles in advisory, deployment and industry solutions built on NVIDIA AI Enterprise

AI Infrastructure Services Opportunity

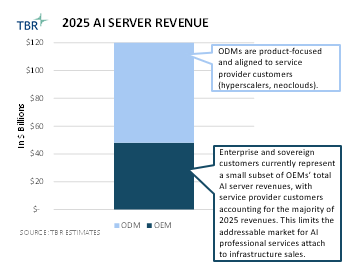

OEMs’ opportunity to participate in agentic AI solutions is closely tied to the slowly but steadily growing segment of enterprises and sovereigns seeking on-premises AI capabilities. This customer group is more likely to invest in a broader range of services from OEMs, including AI advisory and life cycle services, which primarily focus on NVIDIA AI Enterprise and its NVIDIA Blueprints.

TBR expects OEM services engagements centered around AI will be built as extensions of areas where the vendors already have strengths in solution building. These vary to some degree by OEM but largely center on manufacturing, retail, healthcare and financial services. In some cases, such as AI-based edge solutions, OEMs may be able to lead end-to-end engagements with their AI services portfolios. In other cases, OEMs will focus on delivering infrastructure-centric services in support of partnerships, with broader engagements led by systems integrators.

On-premises and hybrid AI adoption will remain in a ramp-up period in 2026. Even with NVIDIA Blueprints as a baseline, most end customers are unlikely to have the skills to deploy these AI solutions in-house. This will lead to services engagements to assist in solution deployment and further expansion of OEMs’ own AI libraries to create more plug-and-play AI solutions for industry use cases.

TBR estimates the potential annual AI-related opportunity for CSPs will reach $170B by 2030, with approximately 53% coming from new revenue and 47% from cost efficiencies

CSPs have been largely sidelined from the new revenue opportunity presented by AI since the emergence of GenAI in 4Q22, but this is starting to change, evidenced by significant deals won by Lumen and Zayo to provide transport between data centers for AI workloads. There are also some green shoots of demand for hyperscalers to leverage CSPs’ network facilities (e.g., wire centers and central offices) and other real estate assets to colocate AI infrastructure closer to end users, as evidenced by efforts being made by Verizon and AT&T.

All CSPs that are investing in AI currently expect to reap cost efficiencies from the technology. The new revenue opportunity is more nuanced and is CSP- and market-specific in nature. APAC-based CSPs are likely to be the largest beneficiaries of new revenue from AI due to government protections, stimulus and cultural orientations toward early adoption of emerging technologies.
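For readers who want the headline estimate in dollar terms, the short sketch below applies the approximate 53%/47% split to the $170B figure. The function and variable names are illustrative assumptions, and the outputs are rounded arithmetic on the stated percentages, not additional TBR data.

```python
def split_opportunity(total_billion: float, new_revenue_share: float) -> tuple[float, float]:
    """Split a total opportunity (in $B) into new revenue and cost efficiencies."""
    new_revenue = total_billion * new_revenue_share
    # Whatever is not new revenue is treated as cost efficiencies.
    cost_efficiencies = total_billion - new_revenue
    return new_revenue, cost_efficiencies


# Figures from the estimate above: $170B by 2030, roughly 53% new revenue.
new_revenue, cost_efficiencies = split_opportunity(170.0, 0.53)
print(f"New revenue: ~${new_revenue:.1f}B")
print(f"Cost efficiencies: ~${cost_efficiencies:.1f}B")
```

Under these assumptions, the split works out to roughly $90B in new revenue and $80B in cost efficiencies per year by 2030.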

Technology Business Research, Inc.
